It should be remembered that entropy, an idea born from classical thermodynamics, is a quantitative entity, and not a qualitative one. That means that entropy is not something that is fundamentally intuitive, but something that is fundamentally defined via an equation, via mathematics applied to physics. Remember in your various travails that entropy is what the equations define it to be. There is no such thing as an "entropy" without an equation that defines it.

Entropy was born as a state variable in classical thermodynamics. But the advent of statistical mechanics in the late 1800s created a new look for entropy. It did not take long for Claude Shannon to borrow the Boltzmann-Gibbs formulation of entropy for use in his own work, inventing much of what we now call information theory. My goal here is to show how entropy works, in all of these cases, not as some fuzzy, ill-defined concept, but rather as a clearly defined, mathematical & physical quantity, with well understood applications.

Classical thermodynamics developed during the 19th century, its primary architects being Sadi Carnot, Rudolf Clausius, Benoît Clapeyron, James Clerk Maxwell, and William Thomson (Lord Kelvin). But it was Clausius who first explicitly advanced the idea of entropy (On Different Forms of the Fundamental Equations of the Mechanical Theory of Heat, 1865; The Mechanical Theory of Heat, 1867). The concept was expanded upon by Maxwell (Theory of Heat, Longmans, Green & Co.). The specific definition, which comes from Clausius, is as shown in equation 1 below.

$$S = \frac{Q}{T} \tag{1}$$

In equation 1, S is the entropy, Q is the heat content of the system, and T is the temperature of the system. At this time, the idea of a gas being made up of tiny molecules, and temperature representing their average kinetic energy, had not yet appeared. Carnot & Clausius thought of heat as a kind of fluid, a conserved quantity that moved from one system to the other. It was Thomson who seems to have been the first to explicitly recognize that this could not be the case, because it was inconsistent with the manner in which mechanical work could be converted into heat. Later in the 19th century, the molecular theory became predominant, mostly due to Maxwell, Thomson and Ludwig Boltzmann, but we will cover that story later.
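As a rough numerical illustration of the Clausius definition, the sketch below applies S = Q/T to heat transferred at a constant temperature. The function names are hypothetical, and the latent heat of fusion of ice (about 334 kJ/kg) is an assumed textbook value rather than a figure from this article.

```python
def entropy_change(heat_joules, temperature_kelvin):
    """Entropy change (J/K) for heat transferred at a constant temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return heat_joules / temperature_kelvin


def net_entropy_change(heat_joules, t_hot, t_cold):
    """Total entropy change when heat leaves a hot body and enters a cold one."""
    # The hot body loses entropy, the cold body gains more than that.
    return entropy_change(heat_joules, t_cold) - entropy_change(heat_joules, t_hot)


# Assumed illustrative values: melting 1 kg of ice at its melting point.
LATENT_HEAT_FUSION = 334_000.0  # J/kg, approximate textbook value
MELTING_POINT = 273.15          # K

print("Melting 1 kg of ice:", entropy_change(LATENT_HEAT_FUSION, MELTING_POINT), "J/K")
print("1000 J flowing from 400 K to 300 K:", net_entropy_change(1000.0, 400.0, 300.0), "J/K")
```

The second call shows why the quantity is useful: heat flowing from hot to cold always yields a positive total, while the reverse direction would give a negative one.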

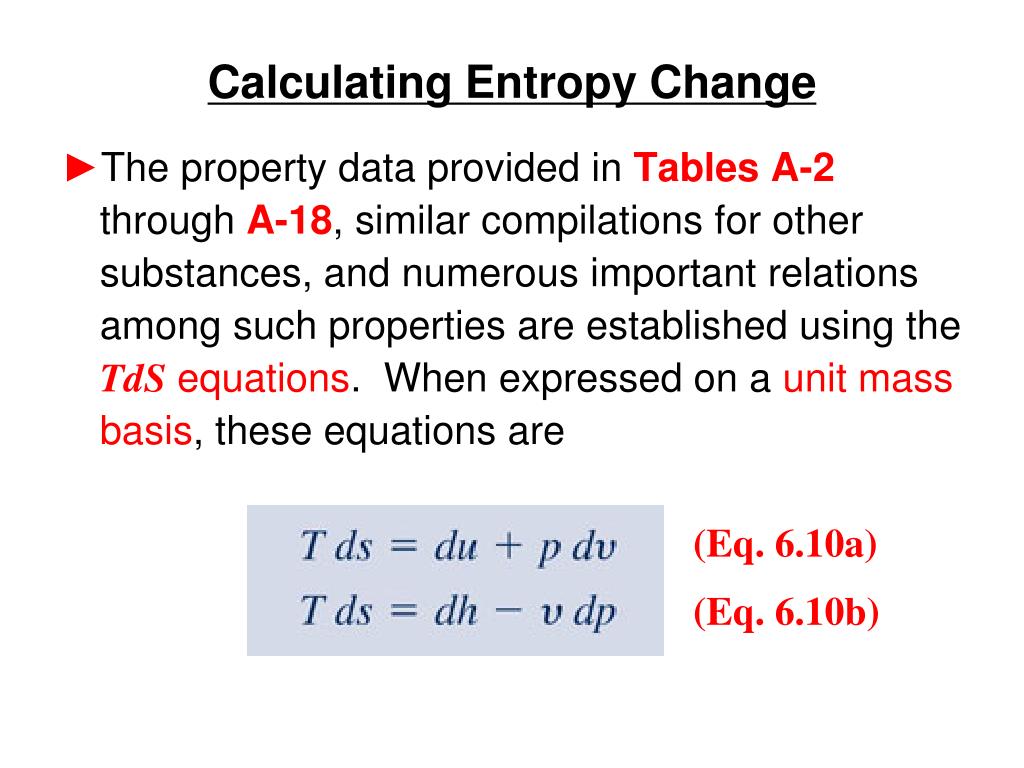

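The popular literature is littered with articles, papers, books, and various & sundry other sources, filled to overflowing with prosaic explanations of entropy.

Entropy of an Ideal Gas

The entropy S of a monatomic ideal gas can be expressed in a famous equation called the Sackur-Tetrode equation:

$$S = Nk\left[\ln\!\left(\frac{V}{N}\left(\frac{4\pi m U}{3Nh^{2}}\right)^{3/2}\right) + \frac{5}{2}\right]$$

Here N is the number of atoms, k is Boltzmann's constant, V is the volume, U is the internal energy, m is the mass of one atom, and h is Planck's constant.

One of the things which can be determined directly from this equation is the change in entropy during an isothermal expansion where N and U are constant (implying Q=W). Expanding the entropy expression for V_f and V_i with log combination rules leads to

$$\Delta S = Nk \ln\frac{V_f}{V_i}$$

For determining other functions, it is useful to expand the entropy expression using the logarithm of products to separate the U and V dependence:

$$S = Nk\left[\ln V + \frac{3}{2}\ln U - \ln N + \frac{3}{2}\ln\frac{4\pi m}{3Nh^{2}} + \frac{5}{2}\right]$$

Then making use of the definition of temperature in terms of entropy:

$$\frac{1}{T} = \left(\frac{\partial S}{\partial U}\right)_{V,N} = \frac{3}{2}\frac{Nk}{U}$$

This gives an expression for internal energy that is consistent with equipartition of energy,

$$U = \frac{3}{2}NkT$$

with kT/2 of energy for each degree of freedom for each atom.

For processes with an ideal gas, the change in entropy can be calculated from the relationship

$$\Delta S = \int_i^f \frac{dQ}{T}$$

Making use of the first law of thermodynamics and the nature of system work, dQ = n C_V dT + P dV, this can be written for n moles of gas with constant molar specific heat C_V as

$$\Delta S = nC_V \ln\frac{T_f}{T_i} + nR \ln\frac{V_f}{V_i}$$

This is a useful calculation form if the temperatures and volumes are known, but if you are working on a PV diagram it is preferable to have it expressed in those terms. Using the ideal gas law, T = PV/nR, this becomes

$$\Delta S = nC_V \ln\frac{P_f V_f}{P_i V_i} + nR \ln\frac{V_f}{V_i}$$

But since specific heats are related by C_P = C_V + R,

$$\Delta S = nC_V \ln\frac{P_f}{P_i} + nC_P \ln\frac{V_f}{V_i}$$

Since entropy is a state variable, just depending upon the beginning and end states, these expressions can be used for any two points that can be put on one of the standard graphs.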
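The ideal gas relationships described in this section can be checked numerically. The sketch below assumes the standard textbook forms: the Sackur-Tetrode equation for a monatomic gas, ΔS = n C_V ln(T_f/T_i) + n R ln(V_f/V_i) for temperatures and volumes, and ΔS = n C_V ln(P_f/P_i) + n C_P ln(V_f/V_i) for a PV diagram. The function names, the helium atomic mass, and the example states are illustrative assumptions, not values from the article.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J s
N_A = 6.02214076e23   # Avogadro constant, 1/mol
R = N_A * K_B         # molar gas constant, J/(mol K)


def sackur_tetrode(n_atoms, volume, internal_energy, atom_mass):
    """Entropy (J/K) of a monatomic ideal gas from the Sackur-Tetrode equation."""
    energy_term = (4 * math.pi * atom_mass * internal_energy
                   / (3 * n_atoms * H ** 2)) ** 1.5
    return n_atoms * K_B * (math.log(volume / n_atoms * energy_term) + 2.5)


def delta_s_tv(n_mol, c_v, t_i, t_f, v_i, v_f):
    """Entropy change (J/K) from initial and final temperatures and volumes."""
    return n_mol * c_v * math.log(t_f / t_i) + n_mol * R * math.log(v_f / v_i)


def delta_s_pv(n_mol, c_v, p_i, p_f, v_i, v_f):
    """Entropy change (J/K) from initial and final pressures and volumes."""
    c_p = c_v + R  # relation between the molar specific heats
    return n_mol * c_v * math.log(p_f / p_i) + n_mol * c_p * math.log(v_f / v_i)


# Assumed example: 1 mol of a monatomic gas (C_V = 3R/2) between two states.
n_mol, c_v = 1.0, 1.5 * R
t_i, v_i = 300.0, 0.0248   # K, m^3
t_f, v_f = 450.0, 0.0496   # K, m^3
p_i = n_mol * R * t_i / v_i  # pressures follow from the ideal gas law
p_f = n_mol * R * t_f / v_f

print("dS from (T, V):", delta_s_tv(n_mol, c_v, t_i, t_f, v_i, v_f), "J/K")
print("dS from (P, V):", delta_s_pv(n_mol, c_v, p_i, p_f, v_i, v_f), "J/K")

# Isothermal doubling of the volume at fixed N and U, using Sackur-Tetrode.
HELIUM_MASS = 6.6464731e-27          # kg per atom, assumed value
u = 1.5 * N_A * K_B * t_i            # U = (3/2) N k T
s_1 = sackur_tetrode(N_A, v_i, u, HELIUM_MASS)
s_2 = sackur_tetrode(N_A, 2 * v_i, u, HELIUM_MASS)
print("Sackur-Tetrode dS:", s_2 - s_1, "J/K; N k ln 2:", N_A * K_B * math.log(2), "J/K")
```

Because entropy is a state variable, the two process forms should agree for the same pair of end states, and the Sackur-Tetrode difference should match N k ln(V_f/V_i) for the isothermal case.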
